Setup the Automation Controller (AWX) using Ansible

Projects: c2platform/ansible, c2platform.mw, c2platform.mgmt, ansible-execution-environment

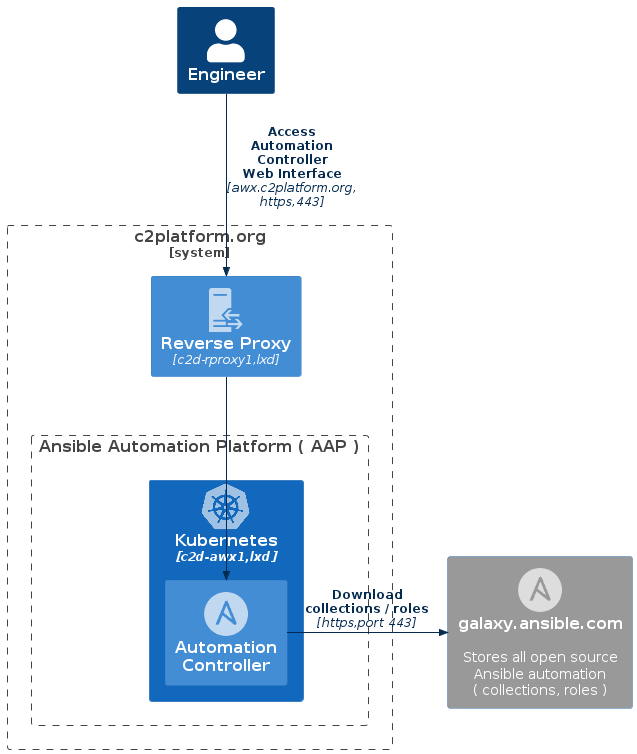

This guide provides step-by-step instructions on how to create an AWX instance on the c2d-awx1 node using the AWX Operator Helm Chart and Ansible. Here’s an overview of the process:

- Kubernetes Cluster Setup: Ansible takes the lead in configuring a Kubernetes instance, leveraging the microk8s role within the c2platform.mw collection.

- Kubernetes Configuration: With the Kubernetes cluster primed, Ansible proceeds to configure it further, harnessing the kubernetes role from the same c2platform.mw collection.

- AWX Deployment: The deployment of AWX onto the Kubernetes cluster is orchestrated using the AWX Operator Helm Chart. Ansible seamlessly manages this deployment process.

- AWX Customization: To finalize the setup, the c2platform.mgmt collection’s awx role is employed to tailor the AWX instance to the desired configuration.

Upon successful completion of these steps, you’ll gain access to the AWX interface by visiting the following URL: https://awx.c2platform.org.

Prerequisites

Create the reverse and forward proxy c2d-rproxy1.

c2

unset PLAY # ensure all plays run

vagrant up c2d-rproxy1

For more information about the various roles that c2d-rproxy1 performs in this project, see:

- Setup Reverse Proxy and CA server

- Setup SOCKS proxy

- Managing Server Certificates as a Certificate Authority

- Setup DNS for Kubernetes

Setup

To create the Kubernetes instance and deploy the AWX instance, execute the following command:

vagrant up c2d-awx1

Warning!

AWX may take some time to become available, which could cause Ansible to fail during step 4, AWX Customization. If you encounter a failure, make sure that you can access the AWX web interface. Once you see the login screen, you can re-run the provisioning process with the following command:

vagrant provision c2d-awx1
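The wait-then-reprovision step described in the warning can be scripted. The following is a minimal sketch, not part of the project: the helper name `wait_for_url` and its retry defaults are hypothetical, and it assumes that curl is available on the host and that https://awx.c2platform.org/api/ answers with a successful HTTP status once AWX is up.

```shell
# Poll a URL until it answers with a successful HTTP status, or give up.
# Usage: wait_for_url URL [ATTEMPTS] [DELAY_SECONDS]
# (hypothetical helper; the defaults of 60 attempts and 10 seconds are arbitrary)
wait_for_url() {
    url=$1
    attempts=${2:-60}
    delay=${3:-10}
    i=1
    while [ "$i" -le "$attempts" ]; do
        # -k: skip certificate verification, -s: silent,
        # -f: exit non-zero on HTTP errors (status >= 400)
        if curl -k -s -f -o /dev/null "$url"; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}
```

For example, `wait_for_url https://awx.c2platform.org/api/ && vagrant provision c2d-awx1` would re-run the provisioning only after AWX responds.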

Verify

To verify your AWX instance, follow these steps:

Connect

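You can also access the cluster with kubectl from your host. The following commands install kubectl via snap (skip that line if kubectl is already installed), copy the cluster configuration from the node, and list all resources. Note that the third command overwrites any existing ~/.kube/config file.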

export BOX=c2d-awx1

sudo snap install kubectl --classic

vagrant ssh $BOX -c "microk8s config" > ~/.kube/config

kubectl get all --all-namespaces


NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system pod/hostpath-provisioner-766849dd9d-jdd5b 1/1 Running 0 17m

kube-system pod/calico-node-vf72q 1/1 Running 0 17m

kube-system pod/coredns-d489fb88-8xpwb 1/1 Running 0 17m

kube-system pod/calico-kube-controllers-d8b9b6478-2dj4s 1/1 Running 0 17m

container-registry pod/registry-6674bf676f-twgpz 1/1 Running 0 17m

kube-system pod/dashboard-metrics-scraper-64bcc67c9c-5hbvz 1/1 Running 0 15m

kube-system pod/kubernetes-dashboard-74b66d7f9c-8hqgf 1/1 Running 0 15m

metallb-system pod/controller-56c4696b5-w2xbs 1/1 Running 0 15m

metallb-system pod/speaker-pkkpk 1/1 Running 0 15m

cert-manager pod/cert-manager-cainjector-7985fb445b-jdkk6 1/1 Running 0 15m

cert-manager pod/cert-manager-655bf9748f-6jxbg 1/1 Running 0 15m

kube-system pod/metrics-server-6b6844c455-7564v 1/1 Running 0 15m

cert-manager pod/cert-manager-webhook-6dc9656f89-br48w 1/1 Running 0 15m

awx pod/awx-operator-controller-manager-699d64766-ltpxh 2/2 Running 0 15m

awx pod/awx-postgres-15-0 1/1 Running 0 14m

awx pod/awx-web-6cb68b86cc-dp8tr 3/3 Running 0 14m

awx pod/awx-migration-24.4.0-cljp4 0/1 Completed 0 13m

awx pod/awx-task-5c96c47f86-r7vn7 4/4 Running 0 14m

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.152.183.1 <none> 443/TCP 17m

container-registry service/registry NodePort 10.152.183.38 <none> 5000:32000/TCP 17m

kube-system service/kube-dns ClusterIP 10.152.183.10 <none> 53/UDP,53/TCP,9153/TCP 17m

kube-system service/metrics-server ClusterIP 10.152.183.147 <none> 443/TCP 16m

kube-system service/kubernetes-dashboard ClusterIP 10.152.183.175 <none> 443/TCP 16m

kube-system service/dashboard-metrics-scraper ClusterIP 10.152.183.61 <none> 8000/TCP 16m

metallb-system service/webhook-service ClusterIP 10.152.183.124 <none> 443/TCP 16m

cert-manager service/cert-manager ClusterIP 10.152.183.51 <none> 9402/TCP 15m

cert-manager service/cert-manager-webhook ClusterIP 10.152.183.177 <none> 443/TCP 15m

kube-system service/dashboard-lb LoadBalancer 10.152.183.133 1.1.4.16 443:32189/TCP 15m

awx service/awx-operator-controller-manager-metrics-service ClusterIP 10.152.183.195 <none> 8443/TCP 15m

awx service/awx-postgres-15 ClusterIP None <none> 5432/TCP 14m

awx service/awx-service ClusterIP 10.152.183.88 <none> 80/TCP 14m

awx service/awx-service-lb LoadBalancer 10.152.183.29 1.1.4.17 8052:32477/TCP 11m

NAMESPACE NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

kube-system daemonset.apps/calico-node 1 1 1 1 1 kubernetes.io/os=linux 17m

metallb-system daemonset.apps/speaker 1 1 1 1 1 kubernetes.io/os=linux 16m

NAMESPACE NAME READY UP-TO-DATE AVAILABLE AGE

kube-system deployment.apps/calico-kube-controllers 1/1 1 1 17m

kube-system deployment.apps/hostpath-provisioner 1/1 1 1 17m

kube-system deployment.apps/coredns 1/1 1 1 17m

container-registry deployment.apps/registry 1/1 1 1 17m

kube-system deployment.apps/dashboard-metrics-scraper 1/1 1 1 16m

kube-system deployment.apps/kubernetes-dashboard 1/1 1 1 16m

metallb-system deployment.apps/controller 1/1 1 1 16m

cert-manager deployment.apps/cert-manager-cainjector 1/1 1 1 15m

cert-manager deployment.apps/cert-manager 1/1 1 1 15m

kube-system deployment.apps/metrics-server 1/1 1 1 16m

cert-manager deployment.apps/cert-manager-webhook 1/1 1 1 15m

awx deployment.apps/awx-operator-controller-manager 1/1 1 1 15m

awx deployment.apps/awx-web 1/1 1 1 14m

awx deployment.apps/awx-task 1/1 1 1 14m

NAMESPACE NAME DESIRED CURRENT READY AGE

kube-system replicaset.apps/calico-kube-controllers-d8b9b6478 1 1 1 17m

kube-system replicaset.apps/hostpath-provisioner-766849dd9d 1 1 1 17m

kube-system replicaset.apps/coredns-d489fb88 1 1 1 17m

container-registry replicaset.apps/registry-6674bf676f 1 1 1 17m

kube-system replicaset.apps/dashboard-metrics-scraper-64bcc67c9c 1 1 1 15m

kube-system replicaset.apps/kubernetes-dashboard-74b66d7f9c 1 1 1 15m

metallb-system replicaset.apps/controller-56c4696b5 1 1 1 15m

cert-manager replicaset.apps/cert-manager-cainjector-7985fb445b 1 1 1 15m

cert-manager replicaset.apps/cert-manager-655bf9748f 1 1 1 15m

kube-system replicaset.apps/metrics-server-6b6844c455 1 1 1 15m

cert-manager replicaset.apps/cert-manager-webhook-6dc9656f89 1 1 1 15m

awx replicaset.apps/awx-operator-controller-manager-699d64766 1 1 1 15m

awx replicaset.apps/awx-web-6cb68b86cc 1 1 1 14m

awx replicaset.apps/awx-task-5c96c47f86 1 1 1 14m

NAMESPACE NAME READY AGE

awx statefulset.apps/awx-postgres-15 1/1 14m

NAMESPACE NAME COMPLETIONS DURATION AGE

awx job.batch/awx-migration-24.4.0 1/1 102s 13m

Refer to Connecting to a Kubernetes Cluster for more information.
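Instead of reading the pod listing by eye, the check can be automated. The sketch below is an illustration only: `unhealthy_pods` is a hypothetical helper that reads the output of `kubectl -n awx get pods --no-headers` (columns NAME, READY, STATUS, ...) from standard input and prints every pod that is neither Completed nor Running with all of its containers ready.

```shell
# Print the names of pods that look unhealthy.
# Input: lines of "NAME READY STATUS ..." as printed by
#        kubectl get pods --no-headers (without --all-namespaces).
# (hypothetical helper, not part of the c2platform project)
unhealthy_pods() {
    awk '{
        # READY has the form "ready/total", for example "3/3"
        split($2, ready, "/")
        ok = ($3 == "Completed") || ($3 == "Running" && ready[1] == ready[2])
        if (!ok) print $1
    }'
}
```

A possible invocation is `kubectl -n awx get pods --no-headers | unhealthy_pods`; empty output would mean that every pod in the awx namespace passed the check.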

Kubernetes Dashboard

- Access the Kubernetes Dashboard by visiting https://dashboard-awx.c2platform.org/ and log in using a token. Obtain the token by running the kubectl command shown below inside the c2d-awx1 node.

- Once logged in, navigate to the awx namespace. Check if the awx-web and awx-task pods are running without any errors.

kubectl -n kube-system describe secret microk8s-dashboard-token

For more information about obtaining the token and setting up the Kubernetes Dashboard, refer to the Setup the Kubernetes Dashboard guide.
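The describe output contains more than the token itself. As a small convenience, the sketch below filters out just the token value; `dashboard_token` is a hypothetical helper, and it assumes the usual `kubectl describe secret` layout in which the token appears on a line starting with `token:`.

```shell
# Extract the token value from "kubectl describe secret" output on stdin.
# (hypothetical helper; assumes a line of the form "token:      <value>")
dashboard_token() {
    awk '$1 == "token:" { print $2 }'
}
```

Inside the c2d-awx1 node this could be used as `kubectl -n kube-system describe secret microk8s-dashboard-token | dashboard_token`.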

AWX

- To access the AWX web interface, go to https://awx.c2platform.org. Use the admin account with the password secret to log in.

- Once logged in, navigate to the Templates section.

- From there, you can launch various plays, such as the reverse proxy play c2-reverse-proxy or one of the AWX plays c2-awx or c2-awx-config.

AWX API

The AWX API is available. To verify:

- Navigate to https://awx.c2platform.org/api/. You should see the API documentation.

- Test whether you can log in using the following command:

curl -k -d '{"username": "admin", "password": "secret"}' \
  -H 'Content-Type: application/json' \
  -X GET https://awx.c2platform.org/api/
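As a complementary check, the credentials can be tested against an endpoint that requires authentication. The sketch below is an assumption-laden illustration: it presumes that HTTP Basic authentication is enabled on this AWX instance and that the /api/v2/me/ endpoint is reachable, neither of which this guide states. The helper name `awx_api_status` is hypothetical. It prints only the HTTP status code, so valid credentials would be expected to yield 200 and invalid ones 401.

```shell
# Print the HTTP status code returned for an authenticated API request.
# Usage: awx_api_status BASE_URL USER PASSWORD
# (hypothetical helper; assumes Basic auth and the /api/v2/me/ endpoint)
awx_api_status() {
    base_url=$1
    user=$2
    password=$3
    # -k: skip certificate verification, -s: silent,
    # -o /dev/null: discard the body, -w: print only the status code
    curl -k -s -o /dev/null -w '%{http_code}' \
        -u "$user:$password" "$base_url/api/v2/me/"
}
```

With the values from this guide, the call would be `awx_api_status https://awx.c2platform.org admin secret`.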

Review

To gain a better understanding of how the AWX instance is created using Ansible, you can review the following:

In the Ansible playbook project c2platform/ansible:

- Vagrantfile.yml: This file configures the c2d-awx1 node with the mgmt/awx playbook. This file is read in the Vagrantfile.

- hosts-dev.ini: The inventory file assigns the c2d-awx1 node to the awx_new Ansible group and localhost to the awx_config Ansible group.

AWX Play

- plays/mgmt/awx.yml: This playbook sets up Ansible roles for the awx_new Ansible group.

- group_vars/awx_new/main.yml: This file contains the main configuration for Kubernetes, including enabling add-ons like dashboard and the metallb load balancer.

- group_vars/awx_new/files.yml: Here, you can find the configuration that creates the AWX Helm Chart values in the /home/vagrant/awx-values.yml file.

- group_vars/awx_new/kubernetes.yml: This file includes the configuration to set up the Kubernetes cluster and install AWX on it.

- group_vars/awx_new/awx.yml: This file contains the configuration for AWX itself. For example, it sets up an organization, credentials, an execution environment, and a job template to provision the reverse proxy.

AWX Configuration Play

The AWX Configuration Play is responsible for managing AWX using AWX itself. Its purpose is to be executed within the AWX environment. Unlike the standard AWX Play, this play employs fewer roles: specifically, it includes only c2platform.core.secrets and c2platform.mgmt.awx. Its scope is limited to configuring AWX, and it assumes the AWX instance has already been established. This play serves as an illustrative example of how AWX can autonomously manage its own setup.

- plays/mgmt/awx_config.yml: This playbook establishes the Ansible roles required for the awx-config play.

- group_vars/awx_config/main.yml: This file contains the configuration settings for AWX customization. It is a direct copy of group_vars/awx_new/awx.yml. Note: In a real-world scenario, copying configuration code like this would not be recommended.

Within the Ansible collection project c2platform.mw:

- The c2platform.mw.microk8s Ansible role.

- The c2platform.mw.kubernetes Ansible role.

Inside the Ansible collection project c2platform.mgmt:

- The c2platform.mgmt.awx Ansible role.

AWX Configuration

Within the AWX instance at https://awx.c2platform.org, you can examine all components generated based on the configurations found in group_vars/awx_new/awx.yml or group_vars/awx_config/main.yml. For instance:

- Job Templates like c2-awx, c2-awx-config, and c2-reverse-proxy.

- Credentials such as c2-machine and c2-vault.

- The Project named c2d and the Inventory named c2.

Manual installation

If you prefer to perform the AWX installation manually, you can provision the c2d-awx1 node without the c2platform.mw.kubernetes Ansible role. To do this, disable or remove the c2platform.mw.kubernetes role in plays/mgmt/awx.yml. Running the vagrant up c2d-awx1 command will then create an “empty” Kubernetes cluster. After that, you can follow the documentation to set up AWX.
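A manual installation with the AWX Operator Helm Chart might look roughly like the sketch below. Everything in it should be read as an assumption to check against the ansible/awx-operator documentation: the chart repository URL, the release name `awx-operator`, and the helper name `install_awx_operator` are illustrative, and the sketch presumes that a `helm` binary on the PATH is already pointed at the cluster.

```shell
# Install the AWX Operator chart into the awx namespace.
# Usage: install_awx_operator [VALUES_FILE]
# (hypothetical helper; repository URL and release name are assumptions)
install_awx_operator() {
    values_file=${1:-}
    helm repo add awx-operator https://ansible.github.io/awx-operator/ || return 1
    helm repo update || return 1
    if [ -n "$values_file" ]; then
        # Pass chart values, for example the AWX instance definition
        helm install awx-operator awx-operator/awx-operator \
            --namespace awx --create-namespace --values "$values_file"
    else
        helm install awx-operator awx-operator/awx-operator \
            --namespace awx --create-namespace
    fi
}
```

If a values file such as /home/vagrant/awx-values.yml is present on the node, it could be passed as the first argument.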

Here are the relevant resources for the manual installation process:

Known Issues

Kustomize

By default, the AWX instance is created using Kustomize. Please refer to the ansible/awx-operator documentation for more details.

However, there is an issue when using a MicroK8s-based Kubernetes cluster. The kubectl apply command will fail with the following message:

evalsymlink failure on ‘/home/vagrant/github.com/ansible/awx-operator/config/default?ref=2.2.1’

vagrant@c2d-awx1:~$ kubectl apply -k .

error: accumulating resources: accumulation err='accumulating resources from 'github.com/ansible/awx-operator/config/default?ref=2.2.1': evalsymlink failure on '/home/vagrant/github.com/ansible/awx-operator/config/default?ref=2.2.1' : lstat /home/vagrant/github.com: no such file or directory': git cmd = '/snap/microk8s/5392/usr/bin/git fetch --depth=1 origin 2.2.1': exit status 128

To reproduce this message, create a file /home/vagrant/kustomization.yaml with the contents shown below and run the command kubectl apply -k .:

vagrant@c2d-awx1:~$ cat kustomization.yaml
apiVersion: kustomize.config.k8s.io/v1beta1
images:
- name: quay.io/ansible/awx-operator
  newTag: 2.2.1
kind: Kustomization
namespace: awx
resources:
- github.com/ansible/awx-operator/config/default?ref=2.2.1

Because Kustomize fails in this setup, the Helm Chart option was used instead.

Links

- ansible/awx-operator: An Ansible AWX operator for Kubernetes built with Operator SDK and Ansible.

- Ansible Documentation

- Red Hat Communities of Practice

- Ansible Community | Ansible documentation
